Cloud contact centers must achieve high scalability and reliability and be cost-efficient for customers and providers.

The underlying architecture must be well-designed to meet these goals and take advantage of modern development techniques, which were only partially available when many current CCaaS platforms were conceived.

Because of its real-time requirements (more on that below), the voice channel poses the most difficult challenges. This is not to say that building an excellent chat solution is entirely straightforward, but voice simply has more inherent complexity. This complexity creates opportunities for differentiation and, if poorly understood, can lead to underperforming solutions.

Historically, CCaaS solutions were built by taking an on-premises voice processing system, also known as a private branch exchange (PBX), together with an automatic call distributor (ACD), and making it available in a cloud model. As seen by the customer, a cloud service is entirely managed by the provider, highly available, flexible to provision, and always functionally up to date. Behind the scenes, how providers deliver these characteristics varies, with different levels of automation and cost. For example, it is well known that even some prominent players provide multitenancy by manually instantiating a whole system for every customer. This is inefficient and becomes a profitability problem over time.

The cost comes from resource consumption (CPU, memory, bandwidth, storage) and management complexity. A good design will optimize these variables and cater to the most demanding scenarios, such as the ones encountered by BPOs, where a large number of calls must be routed back and forth across the globe with low latency to preserve quality.

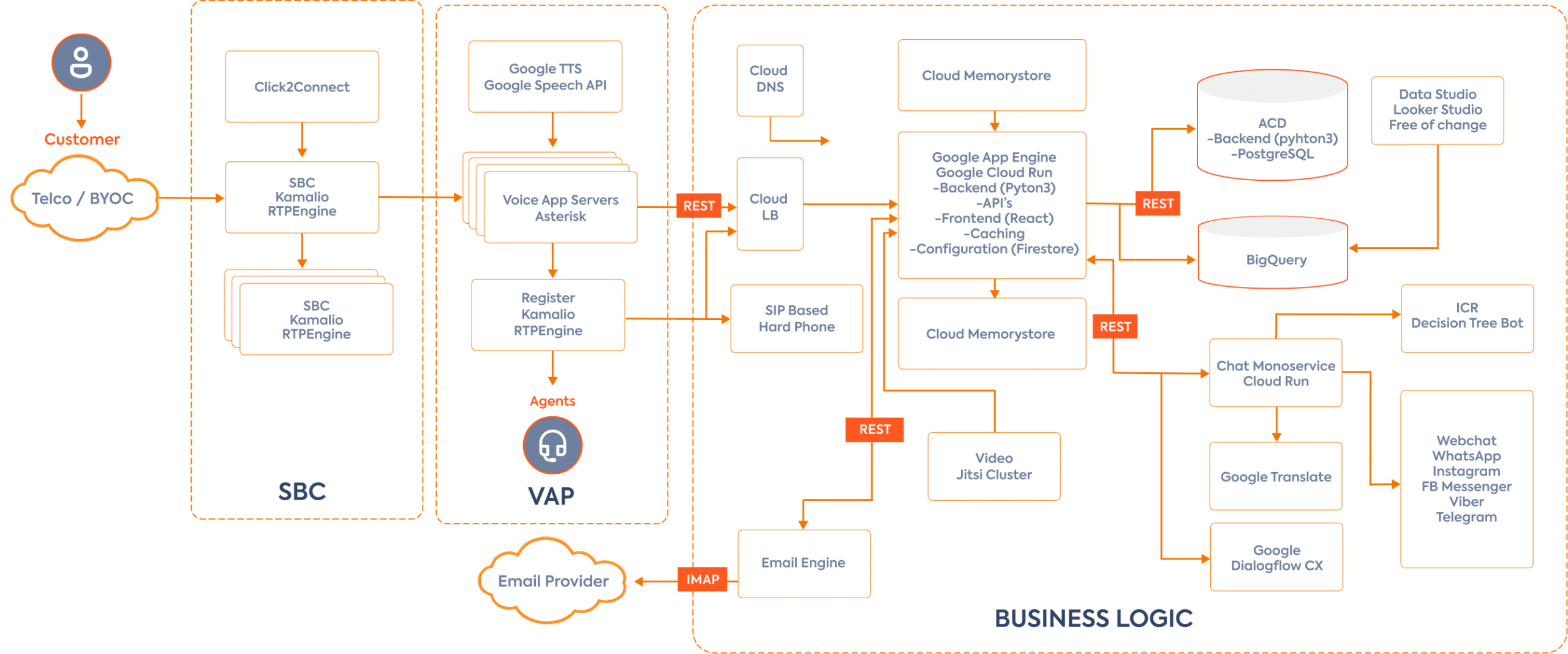

At CCS, we started by asking ourselves how to build the ultimate voice-processing infrastructure, one that meets the most demanding customer requirements and beats competitors large and small on performance, cost, and automation. The resulting approach followed a few principles: break down the call flow into logical steps, decouple these steps as much as possible, ensure high scalability for each step, and ensure super-fast connectivity between the components.

Networking and connectivity

The Google Cloud network enables this super-fast connectivity. Every millisecond counts. Consider a BPO or large enterprise contact center taking calls from Phoenix, Arizona, with agents in Gurgaon, India, or Manila, the Philippines. Data packets must travel about 13,000 km one way, which takes roughly 60 to 65 milliseconds, because light travels about a third slower in fiber than in a vacuum. This is still well within budget, since a one-way delay of up to 150 milliseconds is generally considered acceptable for voice (the ITU-T G.114 guideline). Even so, you do not want to add unnecessary delay.

The options were a private MPLS network or a low-latency public cloud. MPLS is expensive and difficult to manage because it requires colocation facilities, carrier agreements, monitoring tools, and dedicated staff. We selected Google Cloud because it offers the best performance and robust manageability. Selecting and configuring the right services is essential to reach the desired level of networking performance. One of the most important parameters is the number of vCPUs per virtual machine: the more vCPUs, the higher the network throughput a VM can sustain.
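To make the latency budget concrete, here is a back-of-the-envelope propagation calculation. It is a minimal sketch that assumes a fiber group index of about 1.47, a typical value for single-mode fiber. It also ignores routing detours, queuing, codec, and jitter-buffer delays, so real paths will be slower. For 13,000 km it comes out at roughly 64 ms.

```python
# Speed of light in vacuum, km/s.
SPEED_OF_LIGHT_KM_S = 299_792.458
# Assumed group index of standard single-mode fiber (about 1.47):
# light therefore travels at roughly two-thirds of c in fiber.
FIBER_GROUP_INDEX = 1.47
# One-way delay commonly treated as acceptable for voice (ITU-T G.114).
VOICE_ONE_WAY_BUDGET_MS = 150.0


def fiber_propagation_delay_ms(distance_km: float,
                               group_index: float = FIBER_GROUP_INDEX) -> float:
    """One-way propagation delay over fiber, ignoring detours, queuing and processing."""
    speed_km_s = SPEED_OF_LIGHT_KM_S / group_index
    return distance_km / speed_km_s * 1000.0


def remaining_budget_ms(distance_km: float,
                        budget_ms: float = VOICE_ONE_WAY_BUDGET_MS) -> float:
    """How much of the one-way voice budget is left after propagation alone."""
    return budget_ms - fiber_propagation_delay_ms(distance_km)


if __name__ == "__main__":
    # Phoenix to Gurgaon/Manila, using the 13,000 km figure from the text.
    distance = 13_000
    print(f"propagation: {fiber_propagation_delay_ms(distance):.1f} ms, "
          f"remaining budget: {remaining_budget_ms(distance):.1f} ms")
```

Everything else in the voice path, including SBC, VAP, and media processing, has to fit in the remaining budget. That is why each hop the architecture adds matters.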

Once the connectivity layer is available, there are three steps that a call flow goes through:

- Call ingress

- Call routing or business logic application

- Call egress to the agent

We optimized each of these steps with load balancing; autoscaling; separation of media, call signaling, and customer data; and bare-metal machines for optimal performance.

Call ingress

Call ingress is processed by a session border controller (SBC), which accepts the call from a SIP trunk or gateway and sends a SIP INVITE to a voice application server (VAP). Rather than statically configuring an SBC to use a specific VAP or set of VAPs, we implemented an intelligent load-balancing function in the SBC, using techniques such as round-robin or weighted round-robin. Once a VAP is selected, call routing can take place.
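Weighted round-robin lets larger VAPs receive proportionally more calls. The sketch below uses the "smooth" variant popularized by nginx, which interleaves picks instead of sending bursts to the heaviest node. This is an illustrative assumption: the text does not say which weighted round-robin variant the SBC uses, and the VAP names are hypothetical. Plain round-robin is the special case where all weights are equal.

```python
from dataclasses import dataclass


@dataclass
class VapNode:
    """A voice application server (VAP) as seen by the SBC's balancer."""
    name: str
    weight: int       # relative capacity: higher weight receives more calls
    current: int = 0  # running score used by smooth weighted round-robin


class SmoothWeightedRoundRobin:
    """Smooth weighted round-robin.

    Over any window of sum(weights) consecutive picks, each node is chosen
    exactly `weight` times, and picks of heavy nodes are spread out.
    """

    def __init__(self, nodes: list[VapNode]) -> None:
        if not nodes:
            raise ValueError("at least one VAP is required")
        self.nodes = nodes

    def pick(self) -> VapNode:
        total = 0
        best = None
        for node in self.nodes:
            node.current += node.weight  # every node gains its weight
            total += node.weight
            if best is None or node.current > best.current:
                best = node               # first node with the highest score wins ties
        best.current -= total            # the winner pays back the total weight
        return best


if __name__ == "__main__":
    balancer = SmoothWeightedRoundRobin(
        [VapNode("vap-a", 5), VapNode("vap-b", 1), VapNode("vap-c", 1)]
    )
    # vap-a receives 5 of every 7 calls, never more than two in a row here.
    print([balancer.pick().name for _ in range(7)])
```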

SBCs are deployed at the network’s edge as close as possible to the customer or carrier to minimize latency.

VAPs are usually deployed in the same network zone as the SBC. They are stateless machines running on bare metal to optimize call-routing performance. In this context, stateless means that the VAP handles only the SIP messages and the media connection. It requests instructions on what to do with the call from the business logic application via a REST API.

This ensures that the call signaling step can be scaled independently of the business logic. The decoupling permits high performance, irrespective of the complexity of the business logic. In most competing designs, call signaling and business logic are blended into a single application, leading to less flexibility, more resource consumption, and ultimately lower performance.
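The split between a stateless VAP and the business logic can be sketched as follows. The VAP packages the call's identifying fields, asks the business logic what to do, and keeps no routing state of its own. This is a minimal sketch under several assumptions. The JSON field names and action names (`queue`, `play`, `transfer`, `hangup`) are hypothetical, not the CCS API. The `decide` callable stands in for the REST call, which in production would be an HTTP POST.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Invite:
    """The few fields of an incoming SIP INVITE this sketch needs."""
    call_id: str
    from_uri: str
    to_uri: str


# Stand-in for the business logic REST endpoint: takes a JSON request body
# and returns a JSON response body (hypothetical contract).
DecisionApi = Callable[[str], str]

# Hypothetical set of instructions the VAP knows how to execute.
SUPPORTED_ACTIONS = {"queue", "play", "transfer", "hangup"}


def handle_invite(invite: Invite, decide: DecisionApi) -> dict:
    """Ask the business logic what to do with a call; keep no routing state locally."""
    request_body = json.dumps(
        {"call_id": invite.call_id, "from": invite.from_uri, "to": invite.to_uri}
    )
    instruction = json.loads(decide(request_body))
    if instruction.get("action") not in SUPPORTED_ACTIONS:
        raise ValueError(f"unsupported instruction: {instruction!r}")
    return instruction


def fake_business_logic(body: str) -> str:
    """Toy business logic: send toll-free calls to a sales queue, drop the rest."""
    call = json.loads(body)
    if call["to"].startswith("sip:+1800"):
        return json.dumps({"action": "queue", "queue": "sales"})
    return json.dumps({"action": "hangup"})
```

Because the VAP only executes instructions, any VAP can handle any call. The business logic tier can grow more complex, or be scaled out, without touching the signaling layer.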

A second level of load balancing exists between the VAPs and the business logic application, itself autoscaling and distributed.
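The text does not specify the autoscaling policy for the business logic tier. One common choice, assumed here, is the proportional rule used by the Kubernetes Horizontal Pod Autoscaler: desired = ceil(current × observed / target), ignoring changes within a tolerance band to avoid flapping. The metric (concurrent calls per instance) and the minimum and maximum bounds below are hypothetical.

```python
import math


def desired_replicas(current_replicas: int,
                     observed_per_instance: float,
                     target_per_instance: float,
                     min_replicas: int = 2,
                     max_replicas: int = 100,
                     tolerance: float = 0.1) -> int:
    """Proportional autoscaling rule, clamped to [min_replicas, max_replicas].

    If the observed/target ratio is within `tolerance` of 1.0, keep the
    current size to avoid oscillating on small load changes.
    """
    if target_per_instance <= 0:
        raise ValueError("target_per_instance must be positive")
    ratio = observed_per_instance / target_per_instance
    if abs(ratio - 1.0) <= tolerance:
        wanted = current_replicas
    else:
        wanted = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    # 4 instances each carrying 150 calls against a target of 100: scale to 6.
    print(desired_replicas(4, 150, 100))
```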

Application

The microservices-based application relies on the Google Cloud Platform for virtual machines, databases, caching, messaging bus, and analytics.

The multi-tenancy model CCS selected is a single multi-tenant application and a single multi-tenant database for efficiency and development simplicity. Row-level security and DNS subdomain-based access guarantee tenant isolation. When data residency is required, a whole instance of the environment is deployed in the appropriate region or zone.
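The isolation pattern pairs two steps: resolve the tenant from the request's DNS subdomain, then force every query through a tenant predicate. The text does not name the database. Databases with native row-level security enforce the predicate themselves, for example PostgreSQL row security policies. SQLite has none, so this minimal sketch emulates the same effect in the application layer. The base domain `ccs.example.com` and the `queues` table are hypothetical.

```python
import sqlite3

# Hypothetical base domain: each tenant is served at <tenant>.ccs.example.com.
BASE_DOMAIN = "ccs.example.com"


def tenant_from_host(host: str) -> str:
    """Map a Host header such as 'acme.ccs.example.com:443' to the tenant 'acme'."""
    host = host.split(":", 1)[0].lower().rstrip(".")
    suffix = "." + BASE_DOMAIN
    if not host.endswith(suffix):
        raise PermissionError(f"unknown host: {host}")
    tenant = host[: -len(suffix)]
    if not tenant or "." in tenant:
        raise PermissionError(f"invalid tenant subdomain: {host}")
    return tenant


def queues_for(conn: sqlite3.Connection, host: str) -> list[str]:
    """Every read is scoped by tenant_id, emulating row-level security."""
    tenant = tenant_from_host(host)
    rows = conn.execute(
        "SELECT name FROM queues WHERE tenant_id = ? ORDER BY name", (tenant,)
    )
    return [name for (name,) in rows]


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE queues (tenant_id TEXT NOT NULL, name TEXT NOT NULL)")
    conn.executemany(
        "INSERT INTO queues VALUES (?, ?)",
        [("acme", "sales"), ("acme", "support"), ("globex", "billing")],
    )
    print(queues_for(conn, "acme.ccs.example.com"))
```

With native row-level security, the predicate lives in the database rather than in each query. A forgotten `WHERE` clause then cannot leak another tenant's rows.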

Customer simplicity was also a design objective for the application, enabling customers to get to production faster than with other solutions. Very importantly, we strove to deliver a simple yet powerful reporting experience.

Especially in the BPO world, Avaya’s Call Management System (CMS) has shaped contact center reporting, and many customers have organized their teams and processes around it. The CCS reporting architecture revolves around Google’s BigQuery, which allows the data warehouse to be built up in real time, with Looker as the data analysis interface. Real-time dashboards are refreshed within 1 to 2 seconds of an event (a new call entering a queue, for example).

The application stores all the events with no loss of information (to make retrieval faster, some events are also aggregated), and the flexibility of the UI makes it straightforward to replicate a CMS report if required by the customer.
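The "keep every event, also maintain aggregates" pattern can be illustrated in memory. Raw events are appended unchanged, so any report can be rebuilt later. Running counters answer dashboard queries without rescanning history. This is only an illustration of the pattern, not BigQuery or Looker code, and the event kinds are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass(frozen=True)
class CallEvent:
    """A single contact-center event, e.g. a call entering or leaving a queue."""
    timestamp: float
    queue: str
    kind: str  # hypothetical kinds: "enqueued", "answered", "abandoned"


def _waiting_delta(kind: str) -> int:
    """Effect of an event kind on the number of calls waiting in a queue."""
    return {"enqueued": 1, "answered": -1, "abandoned": -1}.get(kind, 0)


@dataclass
class EventStore:
    """Keeps every raw event and also maintains running aggregates for fast reads."""
    raw: list[CallEvent] = field(default_factory=list)
    waiting: Counter = field(default_factory=Counter)
    answered: Counter = field(default_factory=Counter)

    def record(self, event: CallEvent) -> None:
        self.raw.append(event)  # nothing is discarded: reports can be rebuilt from here
        self.waiting[event.queue] += _waiting_delta(event.kind)
        if event.kind == "answered":
            self.answered[event.queue] += 1

    def rebuild_waiting(self) -> Counter:
        """Recompute the waiting-calls aggregate from raw events alone."""
        counts: Counter = Counter()
        for event in self.raw:
            counts[event.queue] += _waiting_delta(event.kind)
        return counts
```

Because the raw history is complete, a CMS-style report that the running counters do not cover can still be derived after the fact from `raw`.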

Call egress

We mostly use WebRTC to connect agents, and hardware SIP phones are also supported. The VAP sends the call to distributed registrars at the network’s edge, close to the agent site. Because of the speed of the Google network, latency between the VAP and the agent is minimal.
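The text says calls go to registrars close to the agent site, but not how "close" is determined. One plausible approach, assumed here purely for illustration, is to pick the healthy registrar with the lowest measured round-trip time from the agent's site. The registrar names and the RTT source are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Registrar:
    """An edge SIP registrar that agents' WebRTC clients or phones register with."""
    name: str
    healthy: bool = True


def pick_registrar(registrars: list[Registrar], rtt_ms: dict[str, float]) -> Registrar:
    """Choose the healthy registrar with the lowest measured RTT from the agent site.

    `rtt_ms` maps registrar name to a recent round-trip-time measurement;
    registrars with no measurement are treated as unreachable.
    """
    candidates = [r for r in registrars if r.healthy and r.name in rtt_ms]
    if not candidates:
        raise LookupError("no reachable registrar for this agent site")
    return min(candidates, key=lambda r: rtt_ms[r.name])
```

Falling back to the next-lowest RTT when the nearest registrar is unhealthy keeps agents connected during an edge failure, at the cost of some extra latency.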

The CCS architecture is designed for scalability and performance. This ensures that CCS can support very large, distributed contact centers while keeping operating costs optimized. It allows for a very high gross margin, because infrastructure cost is contained: it is currently 13% of revenue, which is best in class.

Suppose the company reaches $100M ARR, and conservatively assume $50 per agent per month for voice. The platform would then need to support roughly 170,000 agents ($100M / ($50 × 12) ≈ 166,700). The architecture can grow to this level through horizontal scaling and evolutionary changes, without major rework. Examples of such an evolution are sharding the customer database that holds configuration and routing rules, or sharding the reporting data.
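The 170,000 figure is simple arithmetic, and the sharding evolution mentioned above can be pictured as a stable tenant-to-shard mapping. The sketch below shows both. Hash-modulo sharding is an assumption made for illustration; the text does not say which sharding scheme CCS would use, and `shard_for_tenant` is a hypothetical helper. It uses SHA-256 rather than Python's built-in `hash()`, because the latter is randomized per process for strings.

```python
import hashlib
import math


def agents_supported(arr_usd: float, price_per_agent_month_usd: float) -> int:
    """Agent seats implied by an annual recurring revenue figure (rounded up)."""
    return math.ceil(arr_usd / (price_per_agent_month_usd * 12))


def shard_for_tenant(tenant_id: str, shard_count: int) -> int:
    """Deterministic tenant-to-shard mapping: first 8 bytes of SHA-256, modulo N."""
    if shard_count <= 0:
        raise ValueError("shard_count must be positive")
    digest = hashlib.sha256(tenant_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count


if __name__ == "__main__":
    # $100M ARR at $50 per agent per month, the scenario from the text.
    print(agents_supported(100_000_000, 50))
    print(shard_for_tenant("acme", 8))
```

Plain modulo sharding remaps most tenants whenever `shard_count` changes. A production design would more likely use consistent hashing or a tenant-to-shard directory table, so that shards can be added without mass data movement.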